6. A proposal for ambivalent semantics¶

Every piece of information (document, knowledge base, etc.) can be interpreted in various ways. The works presented in this dissertation aim at taking into account, or even taking advantage of, this ambivalence. In contrast, many works in computer science, especially in the field of knowledge representation, consider that a formal description must have only one valid interpretation. This constraint is meant to guarantee the consistency of how that description is processed, and the interoperability of the tools processing it. This view seems to conflate ambiguity with ambivalence: the former should obviously be avoided, but not at the cost of the latter.

In this chapter, I propose an alternative point of view on the notion of semantics, in an attempt to formalize ambivalence rather than exclude it.

6.1. Terminology¶

Language and sentences¶

We set this proposal in the context of language theory. We define a language as a set of sentences. The sentences of a language are not atomic elements, but can be described as a combination of terms, taken from a set called the vocabulary of that language. Unlike classical language theory, we do not limit the structure of sentences to sequences of terms: we include for example in our proposal tree grammars (Nivat and Podelski 1992) and graph grammars (Rozenberg 1997). This inclusive definition of languages allows us to capture the data actually processed by machines (sequences of discrete symbols) as well as the more abstract structures represented by these data.

Here are a few examples of languages:

Every set of terms can be considered as a trivial language (where each sentence is made of a single term); for example, the language of boolean values or the language of all integers.

The language of all unicode strings: its vocabulary is the set of all unicode characters (The Unicode Consortium 2016), its sentences are finite sequences of those characters.

The language of XML trees: its vocabulary is the set of unicode strings, its sentences are partially ordered trees, whose nodes are typed (element, attribute, text or comment) and labelled with terms of the vocabulary – with constraints on the strings labeling certain types of nodes (Cowan and Tobin 2004).

The language of RDF graphs: its vocabulary is the set of all IRIs, literals and blank node identifiers; its sentences are graphs whose nodes are labelled by terms, and whose arcs are labelled with IRIs (Schreiber and Raimond 2014).

Note also that a language can be defined as a subset of another language. For example:

the Python programming language is a subset of the language of unicode strings;

the language XHTML (Pemberton 2000) is a subset of the language of XML trees.

Meaning and interpretation¶

To talk about the semantics of languages, we first define the notions of meaning and interpretation.

The meaning of a sentence is a non-formal property that is ascribed to that sentence by an external (human) observer. Such an extrinsic meaning can therefore not be unique for a given sentence: it depends on the observer, on their situation, etc. We notice incidentally that the term “meaning” itself carries a notion of intention (as in “I didn’t mean to do that”), and that this proximity can also be found in its French translation vouloir dire (literally “to want to say”).

We call interpretation of a language \(L\) a partial function from \(L\) to another language \(L'\). The inverse relation of an interpretation is sometimes called a representation: if \(I: L → L'\) is an interpretation function, and \(x\) is a sentence of \(L\), then \(y = I(x)\) is the interpretation of \(x\) under \(I\), and \(x\) is a representation[1] of \(y\) under \(I\). This definition is extremely general and, like any generalization, it is only interesting within certain limits: although in principle any partial function could be considered an interpretation, we will use this term only for those functions that aim at capturing some meaning of the sentences to which they apply.
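As a minimal sketch of this definition, a partial function can be modelled in Python as a function returning `None` where it is undefined. The names `interpret_decimal` and `representations`, and the choice of decimal numerals as the example, are assumptions made for this illustration only.

```python
from typing import Callable, Iterable, List, Optional

ASCII_DIGITS = "0123456789"


def interpret_decimal(sentence: str) -> Optional[int]:
    """Partial interpretation from Unicode strings to natural numbers.

    Returns None for sentences that have no interpretation.
    """
    if sentence and all(c in ASCII_DIGITS for c in sentence):
        return int(sentence)
    return None


def representations(
    value: int,
    interpretation: Callable[[str], Optional[int]],
    candidates: Iterable[str],
) -> List[str]:
    """Inverse relation: the candidate sentences whose interpretation is `value`."""
    return [s for s in candidates if interpretation(s) == value]


found = representations(42, interpret_decimal, ["42", "042", "hello", "7"])
```

An interpretation gives at most one result per sentence, whereas the inverse relation may give several: here `found` contains both `"42"` and `"042"`.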

Although related, those notions have important differences. An interpretation function is by definition unambiguous: it associates at most a single interpretation to each sentence of its domain language (or none at all for some sentences, as it may be a partial function). On the other hand, we have seen that a sentence can have several meanings, depending on the agent interpreting it and their context, and many of those meanings can only be reached through multiple levels of interpretation. Those differences account for the ambiguity of the term “semantics”, used to denote meaning or interpretation depending on the context.

6.2. Syntax and semantics¶

Since the seminal works of Chomsky (1957), it is customary to define a formal language through its syntax and its semantics. Syntax is meant to discriminate, among a set of possible sentences, those that belong to the language being defined. Those valid sentences will then be interpreted thanks to the semantics. In other words, syntax focuses on the form, while semantics focuses on the content. Therefore it seems that syntax and semantics, while being intimately linked, are orthogonal to each other. But things are not so clear-cut.

Languages with no semantics?¶

XML (Bray et al. 1998; 2008) is a recommendation aiming to provide an interoperable syntax for exchanging digital documents, without presuming the meaning ascribed to these documents, or their internal representation in the programs exchanging them. This intended agnosticism is the reason why the specification only addresses syntactic aspects (i.e. how to decide whether an XML document is well-formed[2] or not). This has led many people to consider that XML is purely a syntax, with no semantics.

This is however an over-simplification. The syntactic constraints imposed by XML would be pointless if they didn’t allow a common interpretation of XML documents. That confusion can be attributed to two facts. First, this common interpretation exists but it is described in a separate recommendation, namely the Document Object Model (DOM) (Lauren Wood 1998), giving the impression that it is not an essential part of XML. Second, the DOM recommendation describes the standard interpretation of XML documents only indirectly: with the aim of staying neutral with respect to implementations, it does not describe the content of an XML document as a data structure, but through an abstract API for programmatically interacting with the document and its components.

However respectable that goal of neutrality may be, this choice was not suitable for many further specifications based on XML, which required more declarative descriptions of the structure of XML documents. Different such descriptions were therefore proposed (Clark and DeRose 1999; Cowan and Tobin 2004; Fernández et al. 2007), each of them based on a reading “between the lines” of the XML and DOM specifications, but each resulting in a formal model slightly different from the others.

We can see here how the ambiguity of the term “semantics” led to some confusion. It would have been better to acknowledge from the start that XML does have a syntax and a semantics, and to describe the latter (the DOM tree) more explicitly. Still, it could have been emphasized that this first level of interpretation didn’t impose any in-memory representation, nor did it preclude any further interpretation of the DOM tree itself. It may also have prevented this confusion from happening again, as has been the case with JSON (Crockford 2006), successor of XML in some respects. JSON is said now and then to have no semantics, for the same reasons that motivated that claim about XML. Conversely, languages with an explicit formal semantics (typically knowledge representation languages, such as RDF) are expected by some to have an inherent advantage over so-called semantic-less languages, one that would allow them to “magically” capture the whole meaning intended by an author.

Syntax and interpretation¶

Furthermore, syntax and semantics are not always as orthogonal as it seems.

Fig. 6.1 An example parse tree, as produced by a generative grammar¶

First, for languages based on character strings, syntactic analysis is often split in two phases. The first one (lexical analysis) consists in grouping the characters into bigger units (tokens) that can be identified with simple rules (e.g. a sequence of letters and digits), and dropping other irrelevant characters (e.g. spaces and punctuation marks). It is therefore a first interpretation, transforming a character string into a sequence of tokens.

In the second phase, a generative grammar (Chomsky 1957; Crocker and Overell 2008) is used to hierarchically decompose that sequence, according to a number of rules. If this process fails, the sequence is considered syntactically invalid. If it succeeds, the result is a parse tree (such as the one in Fig. 6.1). Hence that phase is also an interpretation, transforming a sequence of tokens into a labelled tree, whose structure will be used by further interpretations (starting with those defined by the language semantics).
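The two phases can be sketched as two partial functions that are then composed. The toy grammar below (sums and products of integers, with parentheses) and the names `lex`, `parse` and `interpret` are assumptions chosen for this illustration; `None` stands for "no interpretation", i.e. a syntactically invalid sentence.

```python
import re


def lex(text):
    """First interpretation: character string -> sequence of tokens (or None)."""
    tokens = re.findall(r"\d+|[+*()]", text)
    # Whitespace is dropped; any other leftover character makes the string invalid.
    if "".join(tokens) != "".join(text.split()):
        return None
    return tokens


def parse(tokens):
    """Second interpretation: token sequence -> labelled tree (or None).

    Grammar: expr := term ('+' term)* ; term := factor ('*' factor)* ;
             factor := NUMBER | '(' expr ')'
    """
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else None

    def factor():
        nonlocal pos
        tok = peek()
        if tok is None:
            raise SyntaxError("unexpected end of input")
        pos += 1
        if tok.isdigit():
            return ("num", tok)
        if tok == "(":
            node = expr()
            if peek() != ")":
                raise SyntaxError("expected ')'")
            pos += 1
            return node
        raise SyntaxError(f"unexpected token {tok!r}")

    def term():
        nonlocal pos
        node = factor()
        while peek() == "*":
            pos += 1
            node = ("mul", node, factor())
        return node

    def expr():
        nonlocal pos
        node = term()
        while peek() == "+":
            pos += 1
            node = ("add", node, term())
        return node

    try:
        tree = expr()
        if pos != len(tokens):
            raise SyntaxError("trailing tokens")
        return tree
    except SyntaxError:
        return None


def interpret(text):
    """Composition of the two interpretations; undefined if either one is."""
    tokens = lex(text)
    return None if tokens is None else parse(tokens)


tree = interpret("1 + 2 * 3")
```

The set of strings on which `interpret` is defined is exactly the toy language; the syntax is thus given by the domain of an interpretation rather than by a separate yes/no test.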

Some grammars go even further in interpreting the data. XML-Schema (Fallside and Walmsley 2004) and Relax-NG (Clark 2002) are two standards for specifying grammars of XML-based languages. Both allow specifying a default value for attributes. This means that, at the end of the syntactic analysis, if the attribute was missing from the input XML tree, it will be considered as present and holding the default value. In other words, the syntactic analysis transforms a possibly incomplete sentence into a complete one.
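The effect of attribute defaults can be sketched as a transformation on XML trees. This is not a schema validator: the `DEFAULTS` table is a hypothetical stand-in for what a schema would declare, and `apply_defaults` is a name invented for this illustration, built on Python's standard `xml.etree.ElementTree` module.

```python
import xml.etree.ElementTree as ET

# Hypothetical defaults: element name -> {attribute name: default value}.
DEFAULTS = {"item": {"quantity": "1"}}


def apply_defaults(root, defaults):
    """Complete a tree by adding every missing attribute that has a default."""
    for element in root.iter():
        for name, value in defaults.get(element.tag, {}).items():
            if name not in element.attrib:
                element.set(name, value)
    return root


doc = ET.fromstring(
    '<order><item name="pen"/><item name="ink" quantity="3"/></order>'
)
apply_defaults(doc, DEFAULTS)
quantities = [item.get("quantity") for item in doc.iter("item")]
```

After the call, the first `item` carries `quantity="1"` although the input never stated it, while the explicit `quantity="3"` is left untouched.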

With those examples, we see that what is called syntax is often much more than a simple binary criterion for distinguishing valid sentences from invalid ones. It is instead a first chain of interpretations. Conversely, any interpretation \(I\) on a language straightforwardly induces a sub-language, namely the set of sentences interpretable under \(I\) (its domain of definition).

Relation with model theory¶

Model theory (Hodges 2013) is the mathematical foundation on which the semantics of many knowledge representation languages, including first-order logic, is defined. It is based on a notion called “interpretation”, which is different from the notion of the same name we have defined above. To avoid confusion, we name MT-interpretations the interpretations defined by model theory.

More precisely, an MT-interpretation of a language \(L\) is a function \(I\) that maps the terms of \(L\) to the elements of a set \(Δ_I\), and assigns a truth value to the sentences of \(L\). We say that \(I\) satisfies a sentence \(s\), or that \(I\) is a model of \(s\), if and only if \(s\) is considered true under \(I\).

The semantics of a language is not defined by a specific interpretation, but by a set of rules constraining which MT-interpretations are relevant for that language. The semantic properties of a sentence \(s\) are therefore defined by the set of all its models: \(s\) is satisfiable if it has at least one model; \(s\) is a tautology if it is true under every possible interpretation; \(s\) is a consequence of another sentence \(s'\) if every model of \(s'\) is also a model of \(s\).

Example

Let us consider a language where terms are lower case letters, upper case letters, and the character =. Sentences are sequences of those characters.

Every MT-interpretation of that language maps:

each lower case letter to an integer,

each upper case letter to one of the operators +, -, × and /,

and satisfies a sentence if and only if, by replacing the letters with their interpretations, one gets an arithmetic expression which is both correct and true.

For example, the sentence \(s_1\): \(x A y = y A x = x B x\) has an infinite number of models, among which:

\(\{x→3, \;\; y→2, \;\; A→×, \;\; B→+\}\)

\(\{x→3, \;\; y→0, \;\; A→×, \;\; B→-\}\)

\(\{x→1, \;\; y→0, \;\; A→+, \;\; B→×\}\)

The sentence \(s_2\): \(x A y = x B x\) is satisfied by every model of \(s_1\) (including those listed above), since it merely drops one of the equalities stated by \(s_1\); it is therefore a consequence of \(s_1\). Note that the converse is not true, since

\(\{x→1, \;\; y→0, \;\; A→-, \;\; B→×\}\)

is a model of \(s_2\) but not of \(s_1\).

NB: for the sake of simplicity, we have only considered MT-interpretations whose domain was the set of integers. A more realistic example would have allowed MT-interpretations to have any domain \(Δ_I\). In that case, the constraints on MT-interpretations would have been to map

each lower case letter to an element of \(Δ_I\),

each upper case letter to a function \(f: Δ_I×Δ_I→Δ_I\),

and the condition for satisfying a sentence would have to be rephrased in a more general fashion.
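The example can be checked mechanically with a short sketch. It rests on several assumptions that are not part of the text: each side of an equality is restricted to the form "operand operator operand", division is exact (so a division by zero makes the expression incorrect), and models are only enumerated over a small range of integers. The names `satisfies` and `models` are likewise illustrative.

```python
from fractions import Fraction
from itertools import product
import operator

OPERATORS = {
    "+": operator.add,
    "-": operator.sub,
    "×": operator.mul,
    "/": operator.truediv,
}


def satisfies(interp, sentence):
    """True iff `interp` is a model of `sentence` (a chain of equalities)."""
    values = []
    for side in sentence.replace(" ", "").split("="):
        if len(side) != 3:  # simplification: only "operand operator operand"
            return False
        left, op, right = side
        try:
            apply = OPERATORS[interp[op]]
            values.append(apply(Fraction(interp[left]), Fraction(interp[right])))
        except (KeyError, TypeError, ValueError, ZeroDivisionError):
            return False  # not a correct arithmetic expression
    return len(values) >= 2 and all(v == values[0] for v in values)


def models(sentence, values=range(-3, 4)):
    """Enumerate the models of `sentence` over a small, finite range."""
    for x, y, a, b in product(values, values, OPERATORS, OPERATORS):
        interp = {"x": x, "y": y, "A": a, "B": b}
        if satisfies(interp, sentence):
            yield interp


S1 = "x A y = y A x = x B x"
S2 = "x A y = x B x"
LISTED = [
    {"x": 3, "y": 2, "A": "×", "B": "+"},
    {"x": 3, "y": 0, "A": "×", "B": "-"},
    {"x": 1, "y": 0, "A": "+", "B": "×"},
]
COUNTER = {"x": 1, "y": 0, "A": "-", "B": "×"}

listed_ok = all(satisfies(m, S1) for m in LISTED)
consequence_ok = all(satisfies(m, S2) for m in models(S1))
```

The enumeration only samples a finite part of the infinite set of models, so `consequence_ok` is a sanity check rather than a proof that \(s_2\) follows from \(s_1\).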

In a way, model theory acknowledges ambivalence, as it allows multiple MT-interpretations of the same language to coexist. This makes languages defined that way very versatile, as they are not restricted to a single interpretation, not even to a single interpretation domain. The drawback is that, by refusing to favor one particular model over the others, model theory can only recognize what is true in all of them. Somehow, it conflates all the models into a single interpretation, which can be seen as their “greatest common divisor”. As a consequence, model theory is very likely to lose a part of the intended meaning of a language, and therefore should not be considered as the ultimate step in the interpretation process.

Summary¶

We have seen that the opposition between syntax and semantics is not a fruitful one, and that our notion of interpretation may provide a unified way to consider them. The multiple interpretations / representations of a sentence are linked together by interpretation functions defined at different levels. As an illustration, Fig. 6.2 shows the different languages and interpretation functions involved in interpreting an OWL ontology. Recall also that the meaning of a sentence is never unique, and that most languages have several possible interpretations, among which only the context allows us to choose. Fig. 6.3 gives an overview of this multiplicity through a few examples.

Fig. 6.2 Interpretation chain (nodes represent languages, edges represent interpretations)¶

Fig. 6.3 Interpretation graph (nodes represent languages, edges represent interpretations)¶

6.3. Congruence¶

As mentioned above, although any partial function from one language to another satisfies our definition of interpretation, this notion is only relevant for some of those functions. We propose that a function can be considered as an interpretation on a language as soon as it accounts for some transformation or processing performed on the sentences of this language, by relating it to a transformation or processing on the interpreted sentences. In order to capture this intuition, we need a formal description of how those transformations are effectively related by interpretations.

Notations¶

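As we are considering partial functions \(f: L → L'\), we need notations for the domain of definition and the range of such functions. In particular, \(In(f)\) denotes the domain of definition of \(f\), i.e. the set of sentences of \(L\) on which \(f\) is defined.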

Definition¶

Let us consider two interpretation functions \(I_1: L_1 → L'_1\) and \(I_2 : L_2 → L'_2\). The languages \(L_1\) and \(L_2\) constitute the realm of representations, whereas the languages \(L'_1\) and \(L'_2\) constitute the realm of interpretations. The notion of congruence aims at capturing the fact that a function \(f: L_1 → L_2\) transforms representations in accordance with how a function \(f': L'_1 → L'_2\) transforms their interpretations.

For this, we define the notions of soundness and completeness[3]. Intuitively, \(f\) is sound with respect to \(f'\) under \((I_1,I_2)\) if every interpretable sentence computed by \(f\) corresponds to a sentence computed by \(f'\). Conversely, \(f\) is complete with respect to \(f'\) under \((I_1,I_2)\) if for every sentence (whose interpretation is) transformed by \(f'\), \(f\) computes the corresponding sentence. Formally:
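One possible formal reading of these two notions is sketched below. It is a reconstruction from the intuitive statements above, checked against the worked illustration of this section, and not necessarily the exact original formulation. Here \(In(g)\) stands for the domain of definition of a partial function \(g\).

\[
f \text{ is sound w.r.t. } f' \text{ under } (I_1,I_2)
\iff
\forall x \in In(f),\;
f(x) \in In(I_2) \Rightarrow
\big( x \in In(I_1) \,\wedge\, I_1(x) \in In(f') \,\wedge\, f'(I_1(x)) = I_2(f(x)) \big)
\]

\[
f \text{ is complete w.r.t. } f' \text{ under } (I_1,I_2)
\iff
\forall x \in In(I_1),\;
I_1(x) \in In(f') \Rightarrow
\big( x \in In(f) \,\wedge\, f(x) \in In(I_2) \,\wedge\, I_2(f(x)) = f'(I_1(x)) \big)
\]

Seen as sets of pairs, the first condition says that \(I_2 \circ f \subseteq f' \circ I_1\), and the second that \(f' \circ I_1 \subseteq I_2 \circ f\).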

Soundness and completeness are two forms of congruence, which we qualify as weak. When \(f\) is both sound and complete with respect to \(f'\) under \((I_1,I_2)\), we say that \(f\) is strongly congruent to \(f'\) under \((I_1,I_2)\). This conveys the idea that applying \(f\) to a representation amounts to applying \(f'\) to the corresponding interpretation. Fig. 6.4 proposes visual representations for soundness, completeness, strong congruence and unspecified congruence.

Fig. 6.4 Visual representation of congruence relations¶

Illustration¶

We illustrate here on an example the notions of congruence defined above. Let us consider the following languages and functions:

\(L_1 = L_2\) is the language of unicode strings of 10 characters or less \(𝕌^{10}\);

\(L'_1\) is the set of natural numbers \(ℕ\);

\(L'_2\) is the language of all sequences of natural numbers \(ℕ^*\);

\(I_1: 𝕌^{10} → ℕ\) interprets strings containing only digits as decimal representations of integers (e.g. \(\text{“42"}\)), even those containing spurious zeros (e.g. \(\text{“042"}\));

\(I_2: 𝕌^{10} → ℕ^*\) interprets strings containing only digits and spaces as sequences of integers, where spaces separate the items of the sequence, and digits are interpreted as in \(I_1\);

\(f': ℕ → ℕ^*\) is the function transforming any positive natural number into the ordered sequence of its prime divisors (without repetition). For example, \(f'(10) = (2,5)\) and \(f'(12) = f'(18) = (2,3)\).

Notice that the definitions of congruence make no assumption about the four functions \(I_1, I_2, f\) and \(f'\). In particular, the functions defined above are

not total (i.e. partial): \(\text{“hello"}\) has no interpretation under \(I_1\) or \(I_2\), 0 has no image under \(f'\);

not injective: \(\text{“42"}\) and \(\text{“042"}\) have the same images under \(I_1\) and \(I_2\), \(f'(12) = f'(18) = (2,3)\);

not surjective: \(10^{10}\) has no representation under \(I_1\) as strings in \(𝕌^{10}\) are limited to 10 characters, \((2,3,5,7,11,13)\) has no representation under \(I_2\) for the same reason, \((5,2)\) is not an image of \(f'\) since \(f'\) produces ordered sequences.

We will now study what it means for a function \(f: 𝕌^{10} → 𝕌^{10}\) to be congruent with \(f'\) under \((I_1,I_2)\).

In order for \(f\) to be sound, every interpretable sentence it computes must correspond to a sentence computed by \(f'\). So we could not have, for example, \(f(\text{“12"}) = \text{“2 5"}\) or \(f(\text{“12"}) = \text{“3 2"}\), otherwise \(f\) would not be sound; instead, any interpretable value of \(f(\text{“12"})\) must represent \((2,3)\), as in \(f(\text{“12"}) = \text{“2 3"}\). By definition, the sentences for which \(f\) produces no output have no impact on soundness, so it is acceptable, for example, if \(f(\text{“4294967296"})\) is undefined[4], even though \(f'(4294967296)\) exists. Still by definition, the sentences for which \(f\) produces a non-interpretable output have no impact on soundness either, so it is equally acceptable, for example, if \(f(\text{“4294967296"})=\text{“too big"}\). It follows that \(f\) could also be defined on non-interpretable sentences (for which \(f'\) cannot possibly have a corresponding output), as long as its output is also non-interpretable. For example, it is acceptable if \(f(\text{“hello"})\) is undefined or returns \(\text{“error"}\), but not if it returns \(\text{“5"}\). Intuitively, this is because, in the latter case, the produced output could be mistaken for (the representation of) an output of \(f'\).

In order for \(f\) to be complete, for every interpretable sentence transformed by \(f'\), \(f\) must compute the corresponding sentence. Therefore it is no longer acceptable for \(f(\text{“4294967296"})\) to be undefined: it should return a representation of the correct value, such as \(\text{“2"}\). Note that, on the other hand, it is now acceptable if \(f(\text{“hello"})\) returns an interpretable sentence, such as \(\text{“5"}\), as completeness is only concerned with interpretable inputs. For the same reason, the fact that \(f'(10^{10}) = (2,5) = I_2(\text{“2 5"})\) is not reflected by \(f\), even though the result is representable, does not prevent \(f\) from being complete (nor sound). Intuitively, the notions of congruence are relative to the interpretations, and the fact that some inputs of \(f'\) have no representation under those interpretations should not be “held against” \(f\) for assessing its congruence.

Now, let us consider the case of \(f'(30030) = (2,3,5,7,11,13)\). The input sentence is representable, but the output is not (its representation would not fit the 10 characters limit). In order to be sound, \(f\) must be undefined on \(\text{“30030"}\), or return a non-interpretable sentence (e.g. \(\text{“too long"}\)). On the other hand, this case prevents any function \(f: 𝕌^{10} → 𝕌^{10}\) from being complete with respect to \(f'\) under \((I_1,I_2)\). Completeness could however be achieved by extending \(L_2\) to accept longer strings.
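The illustration can be replayed with a small sketch, in which `None` stands for "undefined". The checks below follow one reading of soundness and completeness (an assumption of this sketch): every interpretable output of \(f\) must match \(f'\), and every input that \(f'\) can handle through \(I_1\) must be handled by \(f\). They are run over a finite sample only, and the candidate functions `f` and `f_bad` are hypothetical. Treating the empty string as a representation of the empty sequence under \(I_2\) is also an assumption.

```python
DIGITS = "0123456789"


def I1(s):
    """Digit-only strings (10 characters at most) -> natural number."""
    if s and len(s) <= 10 and all(c in DIGITS for c in s):
        return int(s)
    return None


def I2(s):
    """Strings of digits and spaces (10 characters at most) -> tuple of naturals."""
    if len(s) <= 10 and all(c in DIGITS + " " for c in s):
        return tuple(int(t) for t in s.split())
    return None


def f_prime(n):
    """Ordered prime divisors of a positive natural number, without repetition."""
    if n < 1:
        return None
    factors, d = [], 2
    while d * d <= n:
        if n % d == 0:
            factors.append(d)
            while n % d == 0:
                n //= d
        d += 1
    if n > 1:
        factors.append(n)
    return tuple(factors)


def f(s):
    """Hypothetical candidate working on representations only."""
    n = I1(s)
    seq = None if n is None else f_prime(n)
    if seq is None:
        return "error"  # not interpretable under I2
    out = " ".join(map(str, seq))
    return out if len(out) <= 10 else "too long"


def f_bad(s):
    """Same as f, except that it answers "5" on non-interpretable inputs."""
    return "5" if I1(s) is None else f(s)


def is_sound(f, f2, i1, i2, samples):
    for x in samples:
        y = f(x)
        if y is None or i2(y) is None:
            continue  # no interpretable output: no constraint
        a = i1(x)
        if a is None or f2(a) is None or f2(a) != i2(y):
            return False
    return True


def is_complete(f, f2, i1, i2, samples):
    for x in samples:
        a = i1(x)
        if a is None or f2(a) is None:
            continue  # f' has nothing to say about x: no constraint
        y = f(x)
        if y is None or i2(y) is None or i2(y) != f2(a):
            return False
    return True


SAMPLES = ["12", "042", "1", "0", "hello", "4294967296", "30030", "2 3"]
sound = is_sound(f, f_prime, I1, I2, SAMPLES)
complete = is_complete(f, f_prime, I1, I2, SAMPLES)
```

On this sample, `f` passes the soundness check but fails the completeness check because of `"30030"`, whereas `f_bad` fails the soundness check because of `"hello"`.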

Congruent predicates and relations¶

The notions of congruence we have just defined for functions can easily be extended to unary predicates and relations.

Considering two languages \(L\) and \(L'\), an interpretation function \(I: L → L'\), and two predicates \(P ⊆ L\) and \(P' ⊆ L'\), we define congruence relations as:

Those definitions are of course closely related to definitions (6.2) and (6.3). In fact, we can replace predicate \(P\) with a function \(f_P: L → \{\top\}\) mapping to \(\top\) all the sentences of \(L\) verifying \(P\), and only them (and respectively for \(L'\) and \(P'\)). The congruence of \(P\) to \(P'\) under \(I\) is then equivalent to the congruence of \(f_P\) to \(f_{P'}\) under \((I, \text{id})\), where \(\text{id}\) is the identity function on \(\{\top\}\).

Extending this to binary or n-ary relations is straightforward, as \(L\) and \(L'\) could be defined as the cartesian product of several sub-languages \(L_i\) and \(L'_i\) respectively, and \(I\) as the combination of several interpretation functions \(I_i: L_i → L'_i\).

In the special case where \(L = L'\) and where the interpretation is the identity function, then those definitions can be simplified to:

Properties¶

We present here a number of notable properties of congruence relations.

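In the following, we consider languages \(L_1, L_2, L_3, L'_1, L'_2, L'_3, L''_1, L''_2\), and partial functions between them, playing the role either of transformations (such as \(f: L_1 → L_2\) and \(f': L'_1 → L'_2\)) or of interpretations (such as \(I_1: L_1 → L'_1\) and \(I_2: L_2 → L'_2\)).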

Symmetry¶

If \(f\) is sound with respect to \(f'\) under \((I_1, I_2)\), then \(I_1\) is complete with respect to \(I_2\) under \((f, f')\), and conversely.

In this equivalence, the transformation functions and the interpretation functions switch roles. As noted earlier, this may be relevant only with certain functions \(f\) and \(f'\) and in certain contexts.
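To see why the roles can be switched, it helps to view partial functions as sets of pairs, a composite being defined only where both of its steps are. Under the reading (an assumption of this sketch) that soundness means "every pair produced through \(f\) is also produced through \(f'\)" and completeness means the converse inclusion, both sides of the equivalence unfold to the same statement:

\[
f \text{ is sound w.r.t. } f' \text{ under } (I_1, I_2)
\iff I_2 \circ f \subseteq f' \circ I_1
\iff I_1 \text{ is complete w.r.t. } I_2 \text{ under } (f, f')
\]

\[
f \text{ is complete w.r.t. } f' \text{ under } (I_1, I_2)
\iff f' \circ I_1 \subseteq I_2 \circ f
\iff I_1 \text{ is sound w.r.t. } I_2 \text{ under } (f, f')
\]

Both composites go from \(L_1\) to \(L'_2\): one through \(L_2\), the other through \(L'_1\). Which pair of functions is called "transformation" and which is called "interpretation" only determines the name given to each inclusion.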

Transitivity¶

Fig. 6.5 Composition of congruence relations¶

Congruence properties are transitive through composition, either “horizontal” (i.e. applied to the congruent functions) or “vertical” (i.e. applied to the interpretation functions).

The “vertical” version of that property is of particular interest when considering interpretation chains (such as the one represented in Fig. 6.2).

Associativity¶

Fig. 6.6 Associativity of congruence relations¶

When one of the four functions involved in a congruence relation can be expressed as a function composition, it can be decomposed and recomposed with its “adjacent” function, while preserving the congruence properties.

As with the property of symmetry above, one of the functions (\(g\) and \(f'\) in the definitions above) changes role, from transformation to interpretation or conversely. This demonstrates how this distinction is relative, and depends on the point of view.

Let us examine a special case where this property applies: when one of the four functions is the identity function \(\text{id}\). Indeed, any function can be seen as the composition of itself with the identity function: \(f = f ∘ \text{id} = \text{id} ∘ f\). The equations above can, in that case, be rewritten as:

Inverse interpretations¶

If \(I_1\) and \(I_2\) are invertible, then we might wonder how congruence under \((I_1, I_2)\) affects congruence under \((I^-_1, I^-_2)\). This happens only if \(I_1\) and \(I_2\) have certain properties on the domains of \(f\) and \(f'\), respectively:

In particular, those properties are verified if \(I_1\) (respectively \(I_2\)) is a bijection between \(L_1\) and \(L'_1\) (respectively between \(L_2\) and \(L'_2\)).

Equivalence relations¶

Here we consider two relations \(R ⊆ L_1×L_1\) and \(R' ⊆ L'_1×L'_1\). We want to determine how congruence of \(R\) with respect to \(R'\) propagates the properties of an equivalence relation between \(R\) and \(R'\).

It can be shown that, if \(R\) is strongly congruent to \(R'\) under \(I_1×I_1\), then \(R\) is reflexive (respectively symmetric, transitive) on \(L_1\) if and only if \(R'\) is reflexive (respectively symmetric, transitive) on \(L'_1\)[5].

Note that for every function \(f: L_1 → L_2\), we can define the relation \(\equiv_f\) on \(In(f)\) as: \(x \equiv_f y \iff f(x) = f(y)\).

By definition, this relation is strongly congruent with equality under \(f×f\), which satisfies the intuition behind the notion of “equivalence relation”: \(x\) and \(y\) are equivalent as they can be interpreted as equal (according to \(f\)).
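As a small sketch of this relation, sentences can be grouped by their image under an interpretation, so that two sentences fall in the same class exactly when they are interpreted as equal. The helper names and the decimal interpretation used as an example are assumptions of this illustration; `None` stands for "undefined".

```python
from collections import defaultdict


def interpret_decimal(sentence):
    """Example interpretation: ASCII digit strings -> natural numbers."""
    if sentence and all(c in "0123456789" for c in sentence):
        return int(sentence)
    return None


def equivalence_classes(f, sentences):
    """Partition the sentences on which `f` is defined by their image under `f`."""
    classes = defaultdict(list)
    for s in sentences:
        image = f(s)
        if image is not None:  # the relation only lives on the domain of f
            classes[image].append(s)
    return list(classes.values())


classes = equivalence_classes(
    interpret_decimal, ["42", "042", "7", "hello", "0042"]
)
```

Here `"42"`, `"042"` and `"0042"` end up in the same class, `"7"` is alone in its class, and `"hello"` belongs to no class since it has no interpretation.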

Connection with mathematical logic¶

It has probably not eluded the logically inclined reader that the terms “sound” and “complete” are borrowed from mathematical logic. This is because the notions of soundness and completeness in this field are a special case of the notions proposed here: consider \(L\) the set of valid formulae of a formal system \(S\), \(P\) the predicate \(⊦\) indicating that a sentence is derivable in \(S\), and \(P'\) the predicate \(⊧\) indicating that a sentence is a tautology. Then the equations (6.5) above coincide with the classical notions of soundness and completeness, becoming:
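In their standard textbook form, given here as a reminder rather than as a reproduction of the original display, the two classical statements read, for every sentence \(s\) of \(L\):

\[
\text{soundness:}\quad \vdash s \;\Rightarrow\; \models s
\qquad\qquad
\text{completeness:}\quad \models s \;\Rightarrow\; \vdash s
\]

Soundness says that everything derivable is a tautology; completeness says that every tautology is derivable.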

Following the steps of Hofstadter (1979), we can also rephrase Gödel’s first incompleteness theorem in our framework[6]. For any (sufficiently expressive) formal system \(S\) on a language \(L\), there exist:

an unambiguous (invertible) representation of every sentence \(s\) of \(L\) by a natural number \(G(s)\) – or conversely, an interpretation function \(G^-: ℕ → L\);

a certain computation \(C\) such that, for any sentence \(s\), \(C(G(s))\) verifies that \(s\) is derivable in \(S\) – in other words, \(C\) (considered as a predicate on \(ℕ\)) is strongly congruent with \(⊦\) under \(G^-\);

a number \(ɣ\) such that the sentence \(¬C(ɣ)\) is represented by \(ɣ\) itself through \(G\).

The last point is the cornerstone of Gödel’s proof: the sentence \(G^-(ɣ)\) states “ɣ does not satisfy \(C\)”, which can also be interpreted as “\(G^-(ɣ)\) cannot be derived”. Unless the system is inconsistent, neither the sentence nor its negation can be derived in that formal system (Raatikainen 2015): it is undecidable.

The ambivalence is key in this famous result: it uses the fact that the sentence \(G^-(ɣ)\), known as the Gödel sentence of the system \(S\), can be read at two different levels (a statement about the number \(ɣ\), and a statement about itself). But, as Hofstadter points out, it would be naive to think of any of these statements as the correct or final interpretation of that sentence. Although one may be tempted to get rid of the undecidability by adding the Gödel sentence as an axiom, there will still be infinitely many interpretations on \(L\), and only one of them would be “cured” in the process. In other words, there would still be another sentence in \(L\) which, according to another interpretation, would be a Gödel sentence for the new system.

6.4. Ambivalence¶

This notion of congruence provides us with a theoretical and formal framework for apprehending ambivalence. If we consider that any functionality of a computer system can be reduced to a function transforming data, then any interpretation of those data that justifies or explains that transformation (in terms of congruence to another transformation) can be deemed relevant, even if it differs from the original intent of the system.

Software development¶

In its simplest form, developing (a functionality of) a software application amounts to implementing a function \(p\) (the program) that is congruent to a function \(s\) (the specification). The program works on digital representations, while the specification can be defined at an arbitrary level of abstraction. The interpretation functions that allow crossing those abstraction levels, and therefore establishing the congruence of \(p\) to \(s\), depend on the development and execution environments. Ultimately, the computer handles bits, which are interpreted by the machine language as integers or floating point numbers. In C, those can be further interpreted as ASCII characters, or composed into more complex structures (arrays, structured types). Additional libraries may provide further interpretation layers: a geometry library may consider arrays as points or vectors; an XML library may consider character strings as DOM trees.

Note that the example interpretations listed above are of two kinds. Some of them are purely conventional, where the same data in memory is considered differently (e.g. bits, number, character) by different parts of the code. Others involve an actual rewriting of the data to support the interpretation: this is usually the case for XML libraries, where the DOM tree is “materialized” into a dedicated data structure through a parsing process. This latter example illustrates again how the distinction between transformation and interpretation is contextual.
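Both kinds of interpretation can be sketched with Python's standard library. In the first half, the same four bytes are merely read under three different conventions; in the second half, a character string is rewritten into a dedicated tree structure by a parser. The sample bytes, the sample document and the variable names are arbitrary choices made for this illustration.

```python
import struct
from xml.dom import minidom

# Conventional interpretations: the bytes are not rewritten, only read differently.
raw = b"ABCD"
as_text = raw.decode("ascii")             # four ASCII characters
(as_uint,) = struct.unpack("<I", raw)     # one little-endian unsigned 32-bit integer
(as_float,) = struct.unpack("<f", raw)    # one little-endian 32-bit float

# Materialized interpretation: parsing builds a new data structure (a DOM tree).
source = "<a><b>hi</b></a>"
dom = minidom.parseString(source)
root_name = dom.documentElement.tagName
child_text = dom.documentElement.firstChild.firstChild.data
```

In the first case nothing is computed until some code decides how to read the bytes; in the second case the interpretation itself is a transformation whose result occupies memory of its own.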

It follows that, as soon as the specification is expressed beyond the abstraction level provided by the environment, the developer’s work is no longer reduced to implementing \(p\). It also consists in inventing new interpretation functions justifying the desired congruence, either as new conventions, or as new data structures with their associated parsing functions. Those considerations are of course not new: software engineering methodologies have long identified the need to iteratively decompose an abstract specification in order to implement it. The object-oriented paradigm (Meyer 1997), in particular, emphasizes this approach. Furthermore, the most useful and reusable abstractions have been identified and formalized as design patterns (Gamma et al. 1995), or even integrated into programming languages (such as strongly object-oriented programming languages).

As valuable as this trend may have been, helping developers to reuse proven and largely understood abstractions, it also led to a rigidification of practices, to which agile development methods can be seen as a reaction. Those methods endorse the fact that applications provide unplanned affordances, and end up being misused, adapted or diverted, in other words interpreted in multiple ways, different from the originally intended interpretation. By insisting on continuous delivery, they allow development teams to identify those alternative interpretations early on, and to adjust to them. Refactoring, another important component of agile development, can be seen as the process of changing data and program structures (as a result of altering interpretations) while preserving the congruence of the program with the intended specification.

Program and data¶

We have proposed above a point of view on refactoring where the program is considered as a function. Another way to look at it is to consider the program as a sentence (in a programming language) interpretable according to a given interpretation function: the standard semantics of the programming language, specifying the behaviour that each program must have. With this point of view, refactoring can be seen as a transformation of program-sentences, which has to be congruent with identity under the standard semantics (i.e. to preserve the behaviour).
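This second point of view can be sketched by treating source code as plain strings and approximating the standard semantics by the outputs observed on a few sample inputs. That approximation, the convention that each program defines a function named `run`, and the three sample programs are all assumptions of this illustration; agreeing on a finite sample does not prove that behaviour is preserved in general.

```python
def behaviour(source, inputs):
    """Interpret a program-sentence as its observed outputs on sample inputs.

    Assumes (by convention of this sketch) that `source` defines `run`.
    """
    namespace = {}
    exec(source, namespace)
    return tuple(namespace["run"](x) for x in inputs)


ORIGINAL = """
def run(n):
    total = 0
    for i in range(n + 1):
        total = total + i
    return total
"""

REFACTORED = """
def run(n):
    return n * (n + 1) // 2
"""

BROKEN = """
def run(n):
    return n * n
"""

SAMPLES = range(0, 20)
preserved = behaviour(ORIGINAL, SAMPLES) == behaviour(REFACTORED, SAMPLES)
altered = behaviour(ORIGINAL, SAMPLES) != behaviour(BROKEN, SAMPLES)
```

The rewriting from `ORIGINAL` to `REFACTORED` changes the sentence but leaves its interpretation unchanged on the sample, which is what congruence with identity requires; the rewriting to `BROKEN` does not.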

This duality between program and data has long been recognized, even though the distinction is often posed as a working hypothesis for practical reasons. For example, in a Turing machine, the program (i.e. the set of rules specifying the behaviour of the machine) is “embedded” in the machine and static, while the data stored on the tape are modifiable. This distinction is also present in classical computer architecture, where the memory used by a process is divided into a code segment, usually read-only, containing the executable machine code of the program, and a writable data segment, containing the data handled by that program.

Still, the boundary between the two is relative. Turing (1936) proved the existence of a universal machine, able to simulate any other Turing machine described on its tape. Similarly, many programming languages nowadays are interpreted7. In those cases, the program segment only contains the interpreter’s code (a kind of universal machine) while the application program itself is stored in the data segment with its own data, suggesting a threefold partition (interpreter-program-data) instead of the initial twofold partition. Then some libraries also make use of so-called domain-specific languages, or mini-languages (Raymond 2003, chap. 8), promoting a part of the data to yet another level of “program-ness”8.
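The threefold partition can be made concrete with a deliberately tiny example. The sketch below assumes a hypothetical stack-based mini-language of our own invention (instructions `push`, `add` and `mul`); it is not taken from any of the cited works. The function `run` plays the role of the interpreter, the list of instructions is the application program, and the initial stack is that program's data. From the point of view of the host language, the last two are both ordinary data.

```python
def run(program, data):
    """The interpreter: the only part that the host language sees as code."""
    stack = list(data)
    for op, *args in program:
        if op == "push":
            stack.append(args[0])
        elif op == "add":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == "mul":
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        else:
            raise ValueError(f"unknown instruction: {op}")
    return stack


# The application program: a plain list, stored like any other data.
double_then_increment = [("push", 2), ("mul",), ("push", 1), ("add",)]
print(run(double_then_increment, [5]))  # prints [11]

# Because the program is data, ordinary list processing can rewrite it.
triple_then_increment = [
    ("push", 3) if instruction == ("push", 2) else instruction
    for instruction in double_then_increment
]
print(run(triple_then_increment, [5]))  # prints [16]
```

Whether `double_then_increment` counts as program or as data depends on who is looking at it: the author of `run` processes it, while the author of the mini-language program has it executed.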

In Artificial Intelligence, the classical program-data distinction has also been largely questioned, in search of better alternatives. Knowledge Based Systems can be seen as such an alternative, distinguishing a generic reasoning engine from a domain-specific knowledge-base. Further partitions of the knowledge base itself have also been proposed: rules and facts in Prolog, T-box and A-box in description logics (Baader et al. 2003)… We see that different users (e.g. system administrator, developer, knowledge engineer, end user) will have different points of view on which part of the information in the computer’s memory is being executed, and which part is being merely processed by the former.

Self-rewriting programs, and other kinds of meta-programming, muddy the waters a little more regarding the program-data distinction. Arsac (1988) testifies to such practices as early as 1965. It is interesting to notice that he calls them “instruction computation”, which highlights the different abstraction levels at work: the semantics of the program (instructions with an intended behaviour) and its representation in memory (numbers produced by computation). It is even more interesting that, according to Arsac, some people contested that designation, arguing that not all computations were relevant for instructions – which could be restated in our framework: not all computations are congruent to a meaningful transformation of instructions.

Representations and intelligibility¶

The user of a computer system apprehends the underlying data through their perceivable representation(s) offered by the system. More precisely, the system internally processes sentences of a language \(L_i\), and transforms them into a language \(L_p\) perceivable by the user (e.g. textual, graphical, audible). Both languages aim to represent conceptual structures targeted by the system, sentences of a language \(L_c\). Therefore, there are interpretation functions \(I_i: L_i → L_c\) and \(I_p: L_p → L_c\), and the presentation function \(p: L_i → L_p\) must be strongly congruent to the identity function on \(L_c\).

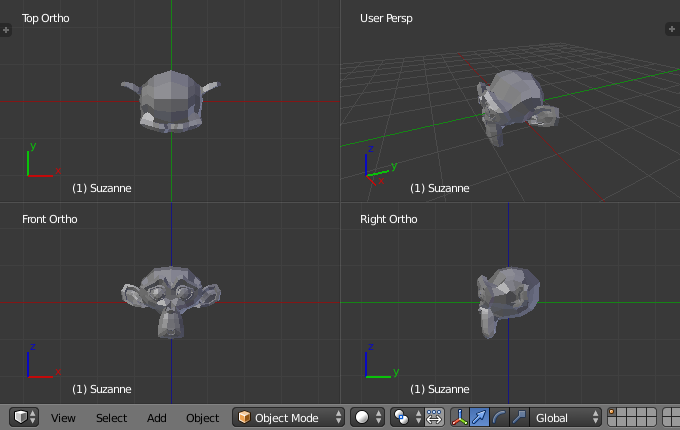
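As a minimal sketch of this requirement, the Python code below instantiates the three languages with assumptions of our own: \(L_i\) is the set of non-negative integers (amounts stored in cents), \(L_p\) is the set of decimal strings shown to the user, and \(L_c\) is the set of exact rational amounts. It only checks, on a finite sample, that interpreting the presentation gives the same conceptual value as interpreting the internal sentence, i.e. that \(I_p(p(s)) = I_i(s)\); it does not reproduce the full definition of strong congruence given earlier in this chapter.

```python
from fractions import Fraction


def I_i(cents: int) -> Fraction:
    """Interpretation of internal sentences: an integer number of cents."""
    return Fraction(cents, 100)


def I_p(text: str) -> Fraction:
    """Canonical interpretation of perceivable sentences: decimal strings."""
    return Fraction(text)


def p(cents: int) -> str:
    """Presentation function from L_i to L_p (non-negative amounts only)."""
    return f"{cents // 100}.{cents % 100:02d}"


def p_lossy(cents: int) -> str:
    """A presentation that hides the cents from the user."""
    return str(cents // 100)


def commutes(presentation, sample) -> bool:
    """Check that I_p(presentation(s)) == I_i(s) for every s in the sample."""
    return all(I_p(presentation(s)) == I_i(s) for s in sample)


sample = range(0, 1000)
assert commutes(p, sample)            # p preserves the conceptual amount
assert not commutes(p_lossy, sample)  # p_lossy behaves like a projection
```

Here `p_lossy` is an example of the "projection" discussed in the next paragraph: taken alone it loses information, which is why the constraint is stated on the whole set of representations available to the user.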

The requirement for \(p\) to be congruent to the identity, rather than to some kind of projection, may seem too strong. Indeed, complex structures are often better apprehended through several partial but complementary representations than through a single exhaustive one, as can be seen in the interface of Advene (see Fig. 5.1) or of 3D modelling applications (see Fig. 6.7). Furthermore, in many applications, the user is never presented with the whole information at once, but has access to it by browsing from one partial representation to another. We can however justify that constraint as follows. First, we consider a function \(p\) returning the whole set of partial representations available to the user (rather than those effectively rendered at a given time). Second, we consider that if any information was totally invisible to the user, including through their interactions with the system, then we can as well assume that this information is absent from the system.

We can then remark that the user may interpret the representations available to them differently from the interpretation \(I_p\), intended by the system designers, which we may call the canonical interpretation, as a reference to Prié (2011). Some social codes (typographical conventions, standard GUI patterns, etc.) may limit this divergence, but can rarely prevent it entirely. Prié also notices that presentation can induce in the user’s interpretation some structures that do not exist in the underlying data. For example, in a word processing application, the fact that two characters are vertically aligned in a paragraph is an effect of presentation, without any counterpart in the data. However, the user may integrate this in their interpretation, and exploit it in their practice (in order, for example, to create a graphical effect in the text). Similarly, the user apprehends the functionalities of the system through presentation. Every operation on the data reflects on their presentation, and if the perceived change is congruent with the functionality assumed by the user, this will confirm their interpretation. On the other hand, a mismatch will lead them to revise their interpretation – or to consider that the system is not working properly10. Following our example above, the user may be frustrated that copying and pasting the paragraph on a page with a different width does not preserve the vertical alignment of its characters.

Therefore, there is a tension in the design of a presentation. Specific presentations help the user conceive an appropriate interpretation (i.e. close enough to the canonical interpretation), and provide meaningful affordances to the system’s functionalities. Generic presentations, on the other hand, are less helpful at first, but allow the user to adapt the system to their own interpretations and use cases, even if these diverge slightly from the ones originally intended by the system designers.

The aim of this chapter was to try and elicit a number of intuitions that drove most of the works presented in this dissertation. The proposed formal framework offers an alternative approach to traditional notions of semantics. In this framework, interpretations are not considered only as a prerequisite to standard processing (although they can still be used that way), but can also become retrospective justifications of ad-hoc processing. Semantics is therefore open-ended, potential interpretations being never exhausted. Work remains to be done, however, to make this framework operational, and provide effective guidelines to build ambivalence-aware systems.

Notes

- 1

NB: if \(I\) is not injective, then the inverse relation is not itself a function, and \(y\) can have several representations under \(I\).

- 2

Actually, XML distinguishes two levels of compliance, well-formedness and validity, but both are syntactic criteria.

- 3

Those terms are borrowed from mathematical logic; we will show at the end of this section that the definitions we give here are a generalization of their usual definitions.

- 4

This could be the case if \(f\) was a computer program using 32-bit integers.

- 5

In fact, it is enough if \(R\) (respectively \(R'\)) has those properties on \(In(I_1)\) (respectively \(Out(I_1)\)). They do not have to be verified on the whole of \(L_1\) or \(L'_1\).

- 6

Note that this incompleteness is not the opposite of \(complete(⊦, ⊧)\), but of a subtly different notion of completeness. Namely, a formal system \(S\) on a language \(L\) is complete if every sentence of \(L\) or its negation can be derived in \(S\). In other words, if \(S\) is incomplete in that sense, some sentences do not have the same truth value in all possible models.

- 7

This includes languages such as Java and C#. Although those languages require a compilation phase, they are not compiled into native machine code, but to a lower-level language (bytecode) that still needs an external native program to be executed. So strictly speaking, this lower-level language is an interpreted language.

- 8

This is humorously summarized by Greenspun’s Tenth Rule of Programming: “Any sufficiently complicated C or Fortran program contains an ad-hoc, informally-specified bug-ridden slow implementation of half of Common Lisp” (http://philip.greenspun.com/research/). Greenspun’s intent here is clearly to encourage the use of high-level languages (such as Common Lisp) instead of re-implementing their functionalities in C or Fortran. But this aphorism also suggests that one could consider a part of the low-level program as an interpreter, and the other part as a higher-level program interpreted by the former.

- 9

https://www.blender.org/manual/editors/3dview/navigate/3d_view.html

- 10

In which case they might be met with the famous meme “it is not a bug, it is a feature”, which we could rephrase: “if the system is not congruent under your interpretation, then your interpretation is wrong, not the system”.

Chapter bibliography

Arsac, Jacques. 1988. “Des Ordinateurs à l’informatique (1952-1972).” In , edited by Philippe Chatelin, 1:31–43. Grenoble. http://jacques-andre.fr/chi/chi88/arsac.html.

Baader, Franz, Diego Calvanese, Deborah L. McGuinness, Daniele Nardi, and Peter F. Patel-Schneider, eds. 2003. The Description Logic Handbook: Theory, Implementation, and Applications. Cambridge University Press.

Bray, Tim, Jean Paoli, C. M. Sperberg-McQueen, Eve Maler, and François Yergeau. 1998. “Extensible Markup Language (XML) 1.0.” W3C Recommendation. W3C. http://www.w3.org/TR/1998/REC-xml-19980210.

———. 2008. “Extensible Markup Language (XML) 1.0 (Fifth Edition).” W3C Recommendation. W3C. http://www.w3.org/TR/xml/.

Chomsky, Noam. 1957. Syntactic Structures. Mouton.

Clark, James. 2002. “RELAX NG Compact Syntax.” OASIS Committee Specification. OASIS. http://www.oasis-open.org/committees/relax-ng/compact-20021121.html.

Clark, James, and Steve DeRose. 1999. “XML Path Language (XPath).” W3C Recommendation. W3C. http://www.w3.org/TR/xpath/.

Cowan, John, and Richard Tobin. 2004. “XML Information Set (Second Edition).” W3C Recommendation. W3C. http://www.w3.org/TR/xml-infoset/.

Crocker, David, and Paul Overell. 2008. “Augmented BNF for Syntax Specifications: ABNF.” RFC 5234. IETF. ftp://ftp.rfc-editor.org/in-notes/std/std68.txt.

Crockford, Douglas. 2006. “The Application/Json Media Type for JavaScript Object Notation (JSON).” RFC 4627. IETF. http://www.ietf.org/rfc/rfc4627.txt.

Fallside, David C., and Priscilla Walmsley. 2004. “XML Schema Part 0: Primer Second Edition.” W3C Recommendation. W3C. http://www.w3.org/TR/xmlschema-0/.

Fernández, Mary, Ashok Malhotra, Jonathan Marsh, Marton Nagy, and Norman Walsh. 2007. “XQuery 1.0 and XPath 2.0 Data Model (XDM).” W3C Recommendation. W3C. http://www.w3.org/TR/xpath-datamodel/.

Gamma, Erich, Richard Helm, Ralph Johnson, and John Vlissides. 1995. Design Patterns: Elements of Reusable Object-Oriented Software. Reading, Mass.: Addison-Wesley.

Hodges, Wilfrid. 2013. “Model Theory.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta, Fall 2013. http://plato.stanford.edu/archives/fall2013/entries/model-theory/.

Hofstadter, Douglas R. 1979. Gödel, Escher, Bach: An Eternal Golden Braid. New York: Basic Books.

Wood, Lauren. 1998. “Document Object Model (DOM) Level 1 Specification.” W3C Recommendation. W3C. http://www.w3.org/TR/REC-DOM-Level-1/.

Meyer, Bertrand. 1997. Object-Oriented Software Construction, Second Edition. Prentice Hall.

Nivat, Maurice, and Andreas Podelski. 1992. Tree Automata and Languages. Amsterdam; New York: North-Holland. http://books.google.com/books?id=x91QAAAAMAAJ.

Pemberton, Steven. 2000. “XHTML 1.0 The Extensible HyperText Markup Language (Second Edition).” W3C Recommendation. W3C. https://www.w3.org/TR/xhtml1/.

Prié, Yannick. 2011. “Vers une phénoménologie des inscriptions numériques. Dynamique de l’activité et des structures informationnelles dans les systèmes d’interprétation.” Thesis, Université Claude Bernard - Lyon I. https://tel.archives-ouvertes.fr/tel-00655574/document.

Raatikainen, Panu. 2015. “Gödel’s Incompleteness Theorems.” In The Stanford Encyclopedia of Philosophy, edited by Edward N. Zalta, Spring 2015. http://plato.stanford.edu/archives/spr2015/entries/goedel-incompleteness/.

Raymond, E. S. 2003. The Art of Unix Programming. Pearson Education.

Rozenberg, Grzegorz, ed. 1997. Handbook of Graph Grammars and Computing by Graph Transformation. Singapore ; New Jersey: World Scientific.

Schreiber, Guus, and Yves Raimond. 2014. “RDF 1.1 Primer.” W3C Working Group Note. W3C. http://www.w3.org/TR/rdf-primer/.

The Unicode Consortium. 2016. The Unicode Standard, Version 9.0.0. Mountain View, CA: The Unicode Consortium. http://www.unicode.org/versions/Unicode9.0.0/.

Turing, Alan M. 1936. “On Computable Numbers, with an Application to the Entscheidungsproblem.” Proceedings of the London Mathematical Society s2-42 (1): 230–65.