Theoretical Computer Science

volume 811, pages 42-55 (2020)

doi:10.1016/j.tcs.2019.01.023

Invited paper for SIROCCO 2017.

Theoretical Computer Science

volume 811, pages 42-55 (2020)

doi:10.1016/j.tcs.2019.01.023

Invited paper for SIROCCO 2017.

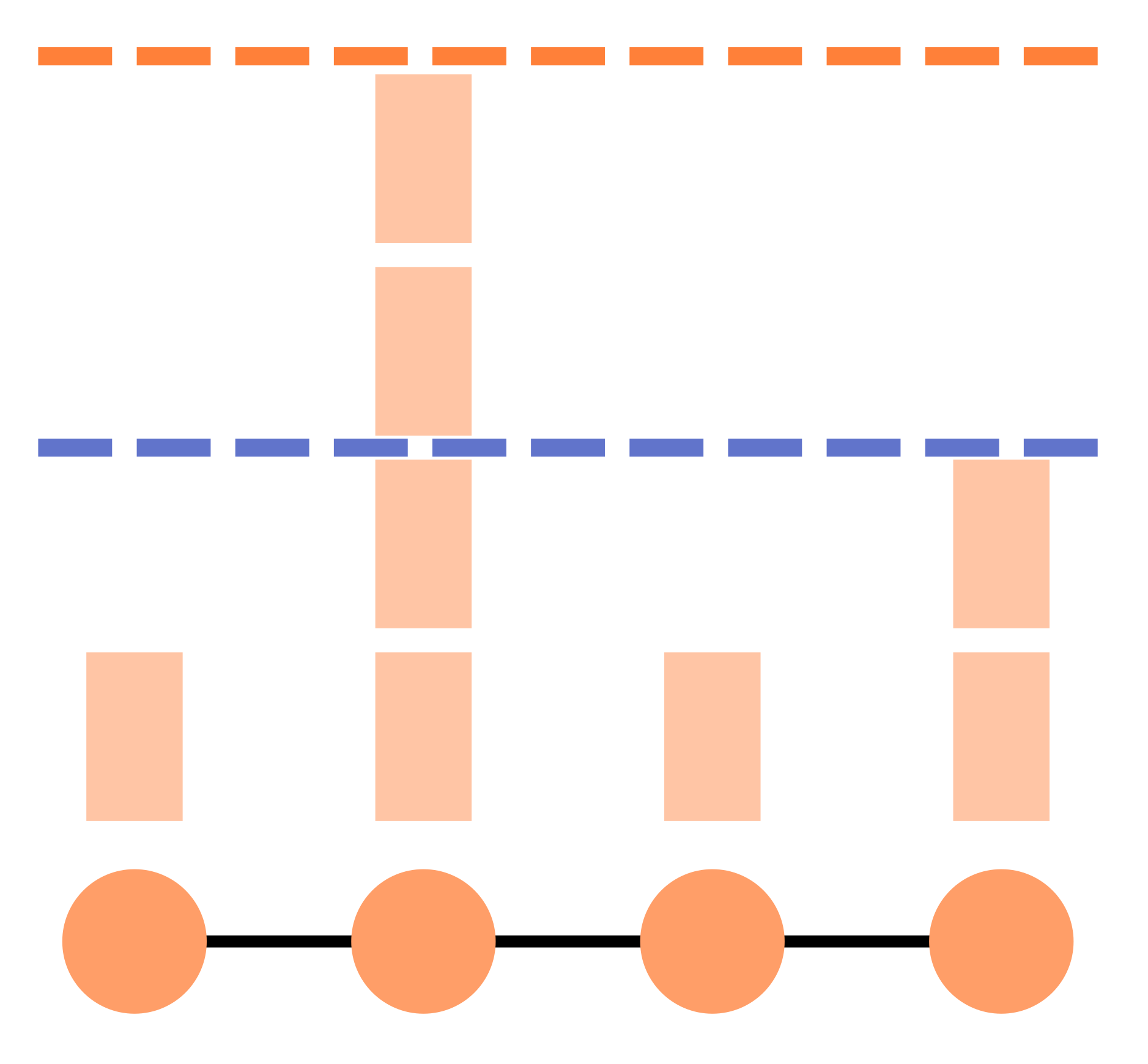

In the context of distributed synchronous computing, processors perform in rounds, and the time-complexity of a distributed algorithm is classically defined as the number of rounds before all computing nodes have output. Hence, this complexity measure captures the running time of the slowest node(s). In this paper, we are interested in the running time of the ordinary nodes, to be compared with the running time of the slowest nodes. The node-averaged time-complexity of a distributed algorithm on a given instance is defined as the average, taken over every node of the instance, of the number of rounds before that node output. We compare the node-averaged time-complexity with the classical one in the standard LOCAL model for distributed network computing. We show that there can be an exponential gap between the node-averaged time-complexity and the classical time-complexity, as witnessed by, e.g., leader election. Our first main result is a positive one, stating that, in fact, the two time-complexities behave the same for a large class of problems on very sparse graphs. In particular, we show that, for LCL problems on cycles, the node-averaged time complexity is of the same order of magnitude as the ``slowest node'' time-complexity.

In addition, in the LOCAL model, the time-complexity is computed as a worst case over all possible identity assignments to the nodes of the network. In this paper, we also investigate the ID-averaged time-complexity, when the number of rounds is averaged over all possible identity assignments. Our second main result is that the ID-averaged time-complexity is essentially the same as the expected time-complexity of randomized algorithms (where the expectation is taken over all possible random bits used by the nodes, and the number of rounds is measured for the worst-case identity assignment).

Finally, we study the node-averaged ID-averaged time-complexity. We show that 3-colouring the $n$-node ring requires $\Theta(\log^*n)$ rounds if the number of rounds is averaged over the nodes, or if the number of rounds is averaged over the identity assignments. In contrast, we show that 3-colouring the ring requires only $O(1)$ rounds if the number of rounds is averaged over the nodes, and over the identity assignments.

A preliminary version of this work appeared as a brief announcement at PODC 2015. See this page.

A second version of this paper appeared as a conference paper at SIROCCO 2017. See here. The main differences, in content, between the BA and the conference version are a generalization of the lower bound for the average on the nodes, and the addition of a part about average on the IDs. There is a error in this version in the randomized part. We describe this error in a later paragraph of these notes.

The current version is the one published in Theoretical Computer Science special issue for the conference. In addition to correcting the error of the conference version, it contains the few proofs that were omitted in the conference version, and more reader-friendly versions of the main proofs.

The error in the conference version is in Theorem 3. This theorem does not hold in general. In the proof, the step where the randomized algorithm simulates the deterministic one requires some nodes (the ones that see twice the same ID) to wait until they see the whole graph and then to choose a proper output to complete the current output. This is not possible in general, and requires an additional hypothesis: the language must be completable. See the journal version for more details.

A recent paper by Barenboim and Tzur, Distributed Symmetry-Breaking with Improved Vertex-Averaged Complexity (ICDCN 2019), shows (among other results) that there is an exponential gap between worst-node and average-node complexity in general graphs for a colouring problem.

In a PODC 2020 paper, Sleeping is Efficient: MIS in O(1)-rounds Node-averaged Awake Complexity Chatterjee, Gmyr, and Pandurangan considered a similar model, and proved that it is possible to design an MIS algorithm where every node in average is active only a constant number of rounds.

In a PODC 2022 paper, Node and Edge Averaged Complexities of Local Graph Problems Balliu, Ghaffari, Kuhn and Olivetti prove among other things that the KMW lower bound holds for the average measure, which implies results on the worst-case complexity too.